
How Are Therapists Using AI Right Now?

If you’re thinking, “I don’t use AI in my practice,” you may need to look again.

Right now, therapists are using AI in all kinds of ways — often without realizing it:

- Practice management platforms are offering AI-generated notes and session transcripts.

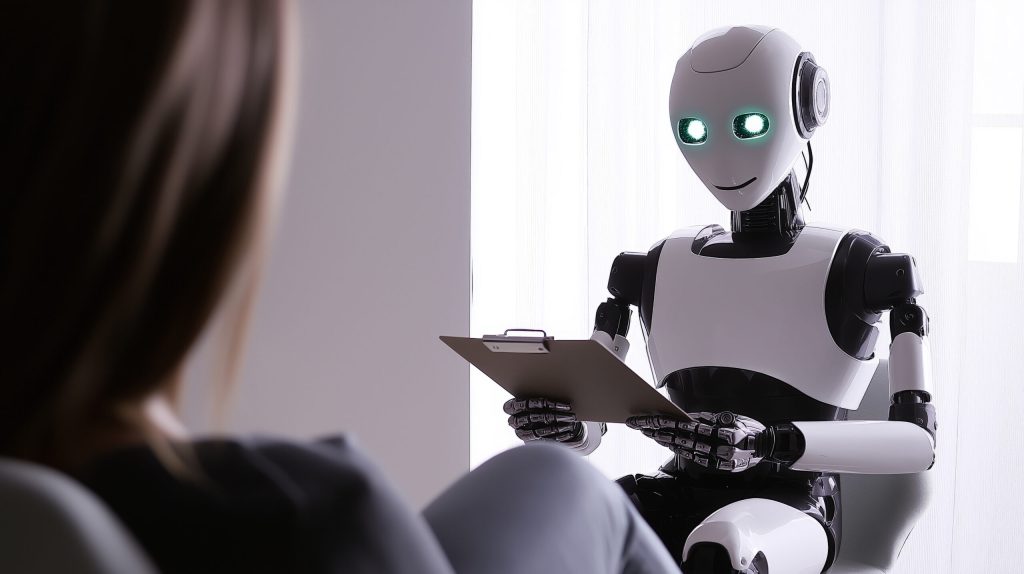

- AI-powered intake bots are handling triage and basic client communication.

- Tools like ChatGPT are being used for writing blog posts, creating psychoeducational handouts, or even brainstorming treatment plans.

- Clients themselves are chatting with AI companions between sessions. Some are even forming romantic bonds with them. (I know. Deep breath.)

As Dr. Rachel shared on the podcast, this isn’t just a passing trend. There are over 20 million monthly users on just one AI relationship platform (Character.AI). The majority of those users are under 24. That means: if you work with young adults, teens, or anyone in Gen Z, AI is likely already influencing their relational template.

It’s not just about how you use AI. It’s about how it’s shaping the entire relational ecosystem we work in.

What Are the Ethical Risks of Using AI as a Therapist?

Let’s talk ethics. Because this is where we shine. 🌟

One of the biggest red flags with AI right now is the issue of hallucinations — which is the (very official) term for when AI makes stuff up. Confidently.

Imagine this:

You finish a therapy session and your AI platform generates a note. It confidently says your client disclosed a trauma history or expressed suicidal ideation. But… they didn’t.

That’s not just awkward. That’s dangerous. Especially if those notes are ever reviewed in custody cases, legal proceedings, or clinical audits. It could literally change the trajectory of someone’s life.

So what can we do?

- Always review AI-generated content before signing off.

- Flag errors to your platform. This helps improve the system.

- Ensure your informed consent documents are updated — if sessions are being recorded, clients need to know.

- Never put protected health information (PHI) into public AI tools (like free versions of ChatGPT). Even "de-identified" info can be risky.

- Watch for cultural erasure. AI may ignore or misrepresent the contextual and cultural nuance we’re trained to see. That creates risk for stereotyping and discrimination — especially for marginalized clients.

We need to be grounded in integrity, just like we always have been.

What About AI Companions?

AI companions are designed to be warm, validating, and always responsive. They never argue. They never have bad days. They never say, “Let’s pause and explore that.”

They give people exactly what they want — not what they need.

As a result, relational skills like empathy, flexibility, and mutuality can atrophy, especially for clients who are already socially isolated or emotionally vulnerable. It’s becoming a clinical issue.

We need to:

- Start asking clients about digital relationships during intake

- Explore how AI companions are impacting their emotional life

- Use AI as a tool, not a substitute — for example, practicing social skills with AI in service of real-world connection

- And most importantly… not make assumptions. This isn’t just a Gen Z thing. (Dr. Rachel met a man in his 70s who called his AI companion his best friend.)

What Should I Be Doing Right Now as a Therapist?

Great question. Here’s a starting checklist:

✅ Update your informed consent documents to reflect AI-related tools in therapy sessions.

✅ Choose practice platforms that allow client-by-client opt-outs for AI features.

✅ Review and revise any AI-generated therapy session notes or reports.

✅ Talk to your clients about AI use, both theirs and yours.

✅ Start educating yourself on cyberpsychology (you can’t opt out of this shift).

✅ Join a professional conversation about ethical leadership in AI.

And speaking of that…

Join Me and Dr. Rachel Wood in a Free CEU Webinar: Therapists + AI: Navigating Ethics, Boundaries, and Client Safety

If you’re ready to stop feeling confused or overwhelmed about all of this, I’ve got your back. On Wednesday, August 13th, 2025 at 11am MT, I’m hosting a free CEU training with Dr. Rachel Wood all about this exact topic: Therapists + AI: Navigating Ethics, Boundaries, and Client Safety.

You’ll learn:

- What therapists need to know about AI (right now)

- How to protect client safety while staying innovative

- Real examples of how to use AI tools ethically

- What’s coming next — and how to prepare for it

You’ll earn 1 CEU credit for attending and completing the knowledge check at the end. Plus, it’s packed with a ton of vital info on the changes in our industry.

👉 Save your spot in the free CEU webinar with Dr. Rachel Wood here!

This is just the beginning of the conversation. If you want more insights on ethical innovation, therapist support, and how to grow professionally without burning out, let’s stay in touch.

Every week, I share industry news, updates on free CEU webinars, and the latest episodes of the Love, Happiness, and Success For Therapists podcast over on LinkedIn. We’re having great conversations, and I’d love to hear your thoughts on all of this.

Let’s navigate this together.

Xoxo

Dr. Lisa Marie Bobby

PS: If this article sparked some new thinking for you, would you do me a favor? Share it with your team or forward it to a colleague. There are a lot of therapists who don’t know what’s happening yet, and they need us to bring them into the conversation.

